
OWLEN

Terminal-native assistant for running local language models with a comfortable TUI.

What Is OWLEN?

OWLEN is a Rust-powered, terminal-first interface for interacting with local large language models. It provides a responsive chat workflow that runs against Ollama with a focus on developer productivity, vim-style navigation, and seamless session management—all without leaving your terminal.

Alpha Status

This project is currently in alpha and under active development. Core features are functional, but expect occasional bugs and breaking changes. Feedback, bug reports, and contributions are very welcome!

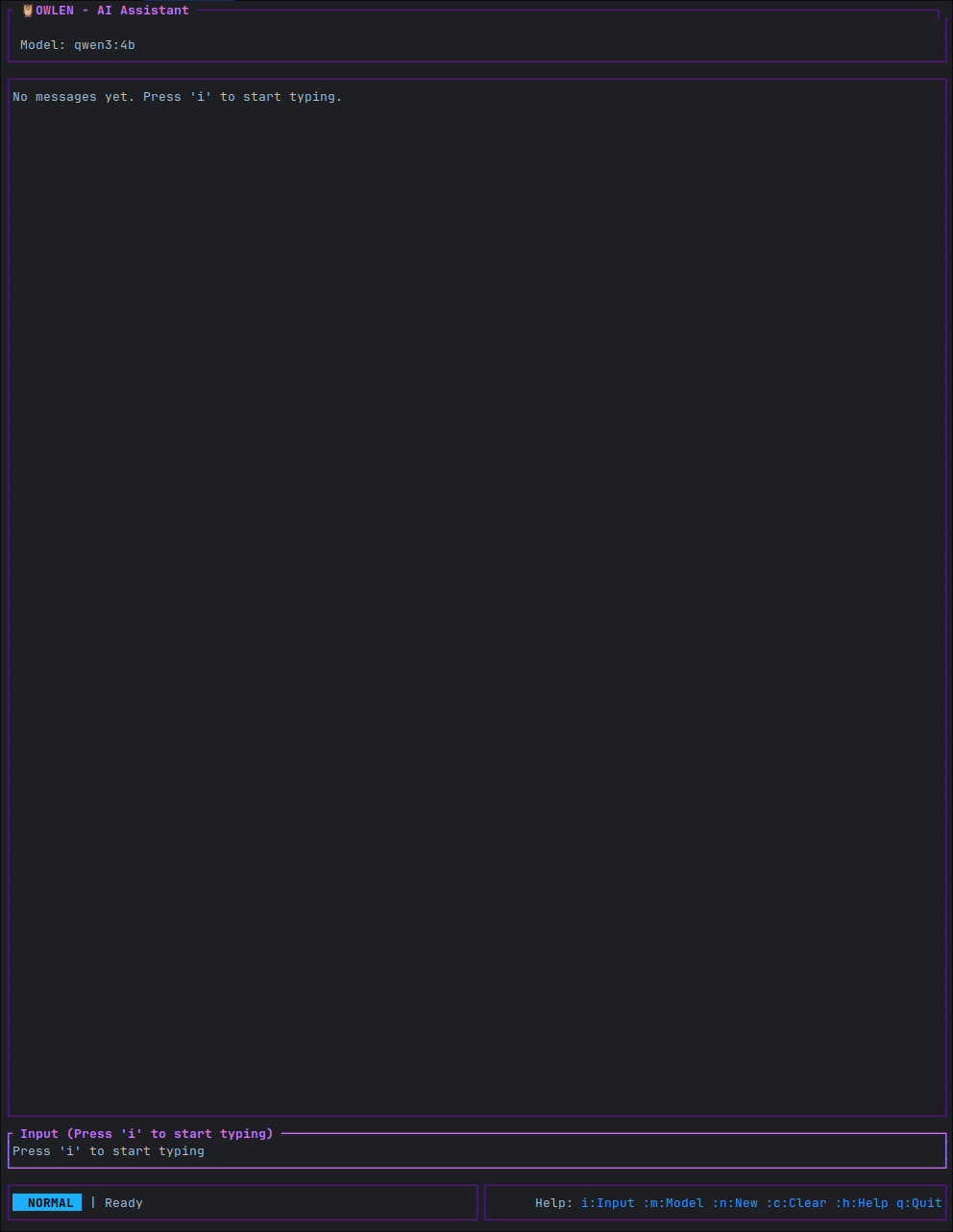

Screenshots

The OWLEN interface features a clean, multi-panel layout with vim-inspired navigation. See more screenshots in the images/ directory.

Features

- Vim-style Navigation: Normal, editing, visual, and command modes.

- Streaming Responses: Real-time token streaming from Ollama.

- Advanced Text Editing: Multi-line input, history, and clipboard support.

- Session Management: Save, load, and manage conversations.

- Theming System: 10 built-in themes and support for custom themes.

- Modular Architecture: Extensible provider system (Ollama today, additional providers on the roadmap).

- Guided Setup: owlen config doctor upgrades legacy configs and verifies your environment in seconds.

Getting Started

Prerequisites

- Rust 1.75+ and Cargo.

- A running Ollama instance.

- A terminal that supports 256 colors.
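
The prerequisites above can be checked from a shell before installing. The following is a minimal sketch, not part of OWLEN: the function names version_ge and check_prereqs are hypothetical, and it assumes Ollama listens on its default address (http://localhost:11434) and that your sort supports the -V version-sort flag.

```shell
# Hypothetical pre-flight check; not part of the OWLEN repository.

# Succeeds when version $1 is greater than or equal to version $2.
# Assumes `sort -V` (version sort) is available, as in GNU coreutils.
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Prints one status line per prerequisite listed above.
check_prereqs() {
  # `rustc --version` prints "rustc <version> (<hash> <date>)"; field 2 is the version.
  if command -v rustc >/dev/null 2>&1; then
    rust_version="$(rustc --version | awk '{print $2}')"
    if version_ge "$rust_version" "1.75"; then
      echo "ok: rustc $rust_version"
    else
      echo "too old: rustc $rust_version (need 1.75+)"
    fi
  else
    echo "missing: rustc"
  fi

  if command -v cargo >/dev/null 2>&1; then
    echo "ok: cargo"
  else
    echo "missing: cargo"
  fi

  # Assumption: Ollama is on its default port; adjust the URL if yours differs.
  if curl -fsS --max-time 2 http://localhost:11434/api/version >/dev/null 2>&1; then
    echo "ok: Ollama is reachable"
  else
    echo "unreachable: Ollama at http://localhost:11434"
  fi

  # `tput colors` reports how many colors the current terminal advertises.
  colors="$(tput colors 2>/dev/null || echo 0)"
  if [ "${colors:-0}" -ge 256 ]; then
    echo "ok: terminal reports $colors colors"
  else
    echo "limited: terminal reports ${colors:-0} colors (need 256)"
  fi
}
```

After sourcing the snippet, run check_prereqs and fix any line that does not start with "ok" before continuing with the installation.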

Installation

Linux & macOS

The recommended way to install on Linux and macOS is to clone the repository and install using cargo.

git clone https://github.com/Owlibou/owlen.git

cd owlen

cargo install --path crates/owlen-cli

Note for macOS: While this method works, official binary releases for macOS are planned for the future.

Windows

The Windows build has not been thoroughly tested yet. Installation is possible via the same cargo install method, but it is considered experimental at this time.

From Unix hosts you can run scripts/check-windows.sh to ensure the code base still compiles for Windows (rustup will install the required target automatically).

Running OWLEN

Make sure Ollama is running, then launch the application:

owlen

If you built from source without installing, you can run it with:

./target/release/owlen

Updating

OWLEN does not auto-update. Run owlen upgrade at any time to print the recommended manual steps: pull the repository, then reinstall with cargo install --path crates/owlen-cli --force. Arch Linux users can update via the owlen-git AUR package.
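
If you update from source often, the two manual steps can be wrapped in a small shell function. This is only a sketch: the update_owlen name, the optional checkout-path argument, and the --ff-only flag on git pull are assumptions added for illustration, not something OWLEN provides or requires.

```shell
# Hypothetical helper, not shipped with OWLEN.
# $1: path to your local clone of the owlen repository (defaults to the current directory).
update_owlen() {
  repo_dir="${1:-.}"

  # Fetch the latest commits. --ff-only (an assumption, not an OWLEN requirement)
  # makes git stop instead of creating a merge commit if local history has diverged.
  git -C "$repo_dir" pull --ff-only || return 1

  # Rebuild and overwrite the installed binary.
  cargo install --path "$repo_dir/crates/owlen-cli" --force
}
```

For example, update_owlen ~/src/owlen pulls and reinstalls from a clone at that (illustrative) path.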

Using the TUI

OWLEN uses a modal, vim-inspired interface. Press F1 (available from any mode) or ? in Normal mode to view the help screen with all keybindings.

- Normal Mode: Navigate with h/j/k/l, w/b, gg/G.

- Editing Mode: Enter with i or a. Send messages with Enter.

- Command Mode: Enter with :. Access commands like :quit, :save, :theme.

Documentation

For more detailed information, please refer to the following documents:

- CONTRIBUTING.md: Guidelines for contributing to the project.

- CHANGELOG.md: A log of changes for each version.

- docs/architecture.md: An overview of the project's architecture.

- docs/troubleshooting.md: Help with common issues.

- docs/provider-implementation.md: A guide for adding new providers.

- docs/platform-support.md: Current OS support matrix and cross-check instructions.

Configuration

OWLEN stores its configuration in the standard platform-specific config directory:

| Platform | Location |
|---|---|
| Linux | ~/.config/owlen/config.toml |
| macOS | ~/Library/Application Support/owlen/config.toml |
| Windows | %APPDATA%\owlen\config.toml |

Use owlen config path to print the exact location on your machine and owlen config doctor to migrate a legacy config automatically.
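
For scripts that need the location without invoking OWLEN, the table above can be mirrored in a few lines of shell. This is a hedged sketch: the owlen_config_path function is hypothetical, it only encodes the three default locations listed here, and it assumes the Windows case runs in a Unix-like shell (Git Bash, MSYS2, or Cygwin) where APPDATA is set. Treat owlen config path as the authoritative answer.

```shell
# Hypothetical helper mirroring the table above; not part of OWLEN.
# $1: kernel name as printed by `uname -s` (defaults to the current system).
owlen_config_path() {
  os="${1:-$(uname -s)}"
  case "$os" in
    Linux)
      printf '%s\n' "$HOME/.config/owlen/config.toml" ;;
    Darwin)
      printf '%s\n' "$HOME/Library/Application Support/owlen/config.toml" ;;
    MINGW*|MSYS*|CYGWIN*)
      # Assumes APPDATA is exported by the Windows environment.
      printf '%s\n' "${APPDATA:-}\\owlen\\config.toml" ;;
    *)
      echo "unsupported platform: $os" >&2
      return 1 ;;
  esac
}
```

Because the macOS path contains a space, quote the result when using it, for example: cat "$(owlen_config_path)".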

You can also add custom themes alongside the config directory (e.g., ~/.config/owlen/themes/).

See the themes/README.md for more details on theming.

Roadmap

Upcoming milestones focus on feature parity with modern code assistants while keeping OWLEN local-first:

- Phase 11 – MCP client enhancements: owlen mcp add/list/remove, resource references (@github:issue://123), and MCP prompt slash commands.

- Phase 12 – Approval & sandboxing: Three-tier approval modes plus platform-specific sandboxes (Docker, sandbox-exec, Windows job objects).

- Phase 13 – Project documentation system: Automatic OWLEN.md generation, contextual updates, and nested project support.

- Phase 15 – Provider expansion: OpenAI, Anthropic, and other cloud providers layered onto the existing Ollama-first architecture.

See AGENTS.md for the long-form roadmap and design notes.

Contributing

Contributions are highly welcome! Please see our Contributing Guide for details on how to get started, including our code style, commit conventions, and pull request process.

License

This project is licensed under the GNU Affero General Public License v3.0. See the LICENSE file for details. For commercial or proprietary integrations that cannot adopt AGPL, please reach out to the maintainers to discuss alternative licensing arrangements.